Bringing State-of-the-Art AI Models to Intel® NPUs

Partnering with Intel to optimize Whisper v3 Turbo, Qwen3, and Phi-4-mini for NPU acceleration on Intel® AI PCs

At Fluid Inference, our goal is simple: make deploying and running local AI models as easy as running application code. For too long, developers have had to rely on cloud infrastructure even for basic AI tasks. But the landscape is rapidly evolving—Intel's new AI PCs with integrated NPUs are making it possible to run sophisticated AI workloads directly on consumer devices.

We're seeing an explosion of developer interest in deploying AI directly into their applications. From indie developers to engineers at major tech companies, there's unprecedented demand for native AI solutions. A fundamental shift is happening: developers are becoming much more educated about AI models, and hardware like Intel's NPUs is finally powerful enough to make local deployment practical.

This is why our collaboration with Intel is so exciting. Together, we optimized transformer models including Whisper v3 Turbo, Qwen3, and Phi-4-mini to harness the full potential of Intel® Core™ Ultra processors with integrated NPUs. These models, typically associated with cloud infrastructure and GPU-heavy workloads, now deliver real-time performance directly on Intel AI PCs.

For more details, read the full article here.

Our Approach

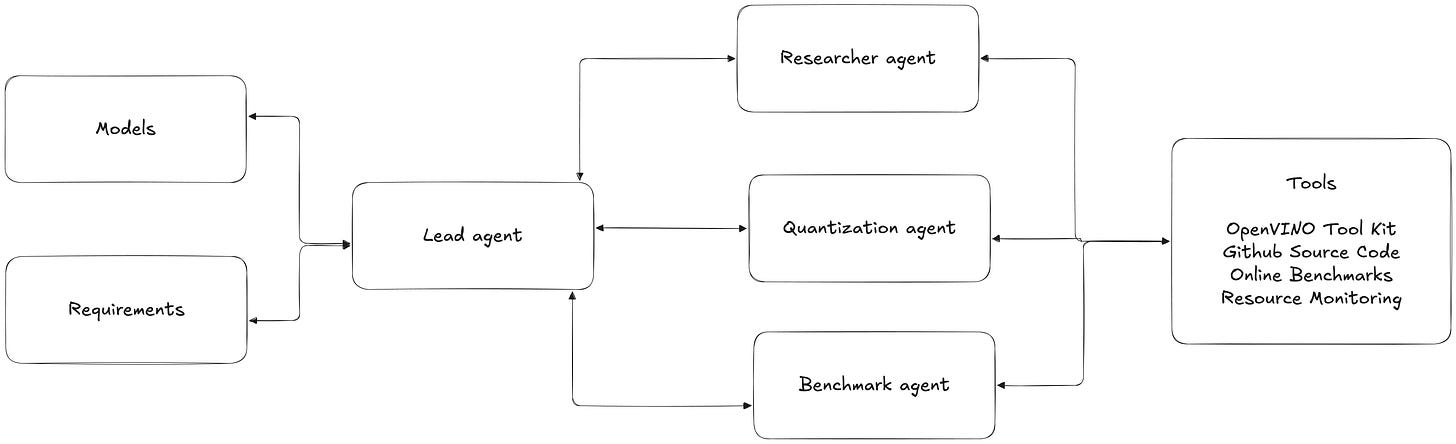

We applied our agent-based optimization system to tackle the complexity of adapting transformer models for Intel NPU execution. Our system takes three key inputs: the models to optimize (Whisper v3 Turbo, Qwen3, Phi-4-mini), Intel NPU hardware specifications, and performance requirements.

The system uses a lead agent that coordinates the entire optimization pipeline, working with specialized agents to handle different aspects of the process. A researcher agent analyzes model architectures and identifies NPU-specific optimization opportunities. The optimization & quantization agent implements these transformations using tools like OpenVINO™, handling precision reduction and graph optimizations. Finally, a benchmark agent validates performance on actual hardware, measuring latency, throughput, and accuracy.

This orchestrated approach allows for rapid iteration and optimization. The agents work together, sharing results and refining their strategies based on real hardware performance data. This enabled us to optimize all three transformer models for Intel NPUs in just a matter of weeks, achieving the impressive performance gains detailed below.
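To make the coordination pattern concrete, here is a minimal sketch of a lead agent running research, optimization, and benchmark stages in a loop until a latency target is met. It is not the actual system: every class and function name is hypothetical, the stages are stubs, and the latency values are made-up placeholders injected so the example runs without hardware.

```python
from dataclasses import dataclass, field
from typing import Callable, Iterator, Optional


@dataclass
class Job:
    """The three inputs described above, plus state shared between agents."""
    model_name: str
    target_device: str
    max_latency_s: float  # hypothetical form of a performance requirement
    log: list = field(default_factory=list)
    latency_s: Optional[float] = None


def researcher(job: Job) -> Job:
    # Stub: a real agent would inspect the model architecture here.
    job.log.append(f"research: analyzed {job.model_name} for {job.target_device}")
    return job


def optimizer(job: Job) -> Job:
    # Stub: a real agent would apply quantization and graph optimizations.
    job.log.append("optimize: applied precision reduction and graph changes")
    return job


def make_benchmark(measurements: Iterator[float]) -> Callable[[Job], Job]:
    # Measurements are injected so the sketch does not need real hardware.
    def benchmark(job: Job) -> Job:
        job.latency_s = next(measurements)
        job.log.append(f"benchmark: {job.latency_s:.2f}s")
        return job
    return benchmark


def lead_agent(job: Job, stages, max_rounds: int = 3) -> int:
    """Run all stages each round; return the round that met the target, or 0."""
    for round_no in range(1, max_rounds + 1):
        for stage in stages:
            job = stage(job)
        if job.latency_s is not None and job.latency_s <= job.max_latency_s:
            return round_no
    return 0


# Placeholder latencies (0.9, 0.6, 0.4) are illustrative, not measurements.
job = Job("whisper-v3-turbo", "NPU", max_latency_s=0.5)
stages = [researcher, optimizer, make_benchmark(iter([0.9, 0.6, 0.4]))]
rounds_needed = lead_agent(job, stages)
print(rounds_needed, job.latency_s)
```

The point of the design is that the benchmark result feeds back into the next round, so each pass through the stages is judged against real measurements rather than estimates.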

Results

Whisper v3 Turbo takes about 39% less time per segment on Intel NPUs than on CPU (down from 0.31s to 0.19s, roughly a 1.6x speedup), and we didn't sacrifice any accuracy to get there. The model processes audio in real time, which is crucial for live applications.
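The two latency figures quoted above can be turned into a relative reduction and a speedup factor with a few lines of arithmetic. The helper name below is ours, chosen for illustration; only the 0.31s and 0.19s values come from the measurements.

```python
def latency_change(baseline_s: float, optimized_s: float) -> tuple:
    """Return (fractional latency reduction, speedup factor)."""
    reduction = (baseline_s - optimized_s) / baseline_s
    speedup = baseline_s / optimized_s
    return reduction, speedup


# CPU vs. NPU per-segment latency from the results above.
reduction, speedup = latency_change(0.31, 0.19)
print(f"{reduction:.1%} lower latency, {speedup:.2f}x speedup")
# prints "38.7% lower latency, 1.63x speedup"
```

Note that "percent faster" is ambiguous: the same pair of numbers is a 39% drop in latency but a 63% gain in throughput, so it helps to state which one is meant.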

For language tasks, Qwen3 and Phi-4-mini deliver about 70-75% of GPT-4's quality on summarization and Q&A, which is pretty impressive for models running entirely offline. Power consumption also dropped significantly compared to GPU inference, though exact numbers vary by workload.

These aren't just benchmark numbers. A Fortune 100 company is already using our NPU-optimized models in their next-generation AI application.

Resources for Developers

All our NPU-optimized models are available on Hugging Face. We're also building a native .NET library to make it easier to deploy GenAI workloads in Windows applications.
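Not every machine your users run will have an NPU, so an application usually needs a fallback order. The sketch below is one way to pick a device, assuming OpenVINO-style device names such as "NPU", "GPU", "GPU.0", and "CPU". The function name and the preference order are our assumptions, not part of any library.

```python
def pick_device(available, preferred=("NPU", "GPU", "CPU")):
    """Return the first available device matching the preference order.

    Matches exact names ("GPU") and indexed names ("GPU.0").
    """
    for want in preferred:
        for name in available:
            if name == want or name.startswith(want + "."):
                return name
    raise RuntimeError(f"none of {preferred} found in {list(available)}")


# Hard-coded lists so the sketch runs without any hardware or libraries.
print(pick_device(["CPU", "GPU", "NPU"]))      # NPU
print(pick_device(["CPU", "GPU.0", "GPU.1"]))  # GPU.0
print(pick_device(["CPU"]))                    # CPU

# With OpenVINO installed, the list could come from
# openvino.Core().available_devices, and the chosen name could be
# passed as the device argument to Core.compile_model.
```

Keeping the selection logic separate from the runtime call makes it easy to test on machines that have no NPU at all.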

If you're looking to deploy local AI solutions—whether with your own models or open-source ones—reach out through fluidinference.com. We'd love to help you bring AI directly to your users' devices.